Where Does the Bridge Get Built?

This is the third essay in the From Spark to System series. Part I: “Why the Real AI Revolution Hasn’t Happened Yet.” Part II: “The Bridge That Was Never Built.”

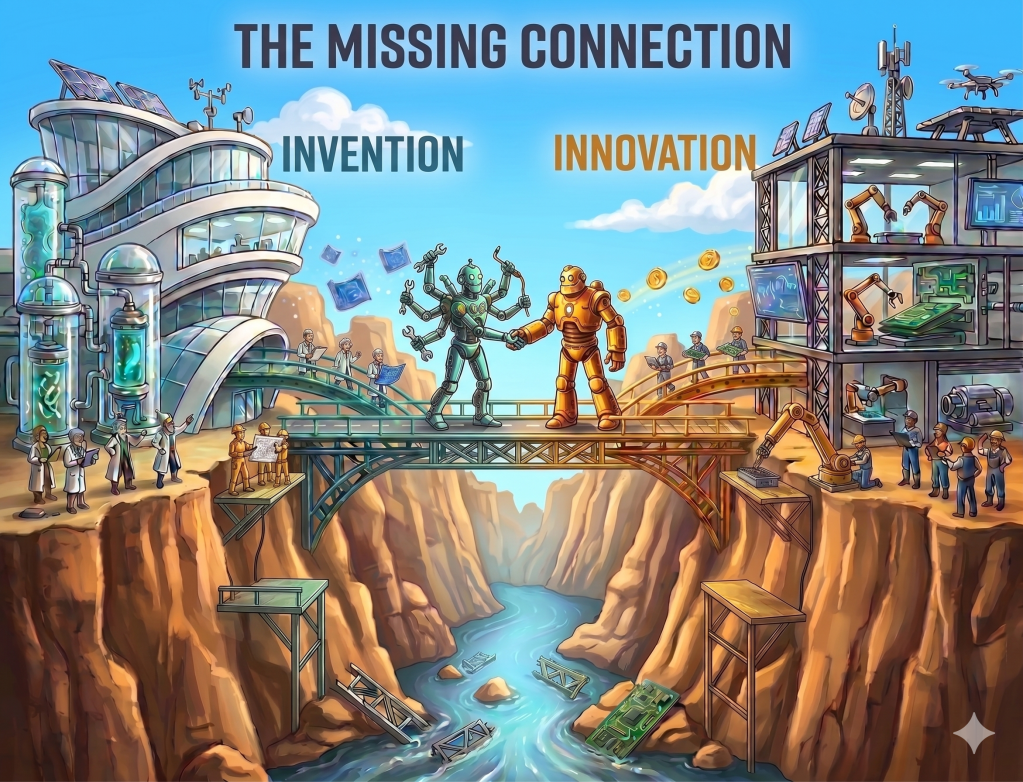

The previous two posts in this series built a framework. The first argued that the AI revolution most people are anticipating has not yet happened because the supporting infrastructure, what W. Brian Arthur calls the domain, is still being assembled. The second argued that the gap between what is technically possible and what adds value to the world is not just a domain-completion problem, but a translation problem. Without translation, inventions die before reaching the real world. Translation, however, requires organizational conditions and leadership that most institutions do not cultivate.

What I want to do in this third post is apply that framework to the organizations actually shaping the AI landscape right now: the research laboratories, the technology platforms, and the enterprise ecosystem struggling to deploy AI at scale. I have spent a long time working in and around these environments: in research labs, in startups, in large technology companies, and in the enterprise space. I am not a neutral observer. But I will call things as I see them, based on publicly available announcements and literature. Readers who work inside the organizations I discuss should feel free to disagree with my assessments: they have access to information I do not, and insider perspective almost always enriches the picture that public sources paint. I welcome that disagreement, and I mean these observations as the beginning of a conversation, not the final word.

I am not going to rank organizations or predict outcomes. As someone once said, predicting the future is difficult, especially ahead of time. The field is moving faster than any previous technological revolution, and too much remains unresolved for predictions to be useful. What I can offer, using the framework developed in the previous posts, is a perspective on where each phase of the innovation arc is currently being executed, where the gaps are, and what that means for where the most important work is actually happening.

The Invention Layer: Where the Domain Is Being Pushed

The organizations doing the most consequential AI invention today share a feature with Bell Labs and Xerox PARC: they are environments where the primary measure of success is the quality and novelty of the research, not the speed of its commercial application. The cases I discuss here (Google DeepMind, Anthropic, Meta AI Research, and OpenAI) are not an exhaustive list. Microsoft Research, IBM Research, and a number of academic institutions, for instance, are also contributing serious work, and the open-source and academic communities in particular deserve more credit than they typically receive in discussions of this kind. What I am identifying here are the organizations whose current invention activity seems most consequential for the direction of the field.

Google’s claim on the invention layer is historically exceptional. The Transformer architecture, which is the foundation of virtually every significant large language model in existence today, was developed at Google and published in the landmark 2017 paper “Attention Is All You Need” by eight Google researchers. Google also contributed to foundational techniques in reinforcement learning from human feedback, built some of the earliest large-scale language models, and through DeepMind has produced a sustained record of research achievements, AlphaFold being perhaps the most consequential scientific result produced by any AI laboratory in the past decade. By any measure of invention depth, Google’s position is extraordinary. It is worth noting, however, that all eight authors of the Transformer paper subsequently left Google to join other companies or to found their own startups. While the invention was Google’s, the translation of that invention into the products that defined the current AI era happened largely elsewhere.

DeepMind in particular represents an invention environment in the precise sense developed in Part II: a culture organized around ambitious long-horizon problems, with the psychological safety and organizational patience that genuine research requires. The integration of DeepMind into Google’s broader structure has been a subject of ongoing organizational negotiation, and the tension between a research culture optimized for invention and a product culture optimized for deployment is, in DeepMind’s case, not a failure of either side but an expression of the structural incompatibility and complexity discussed in the previous posts.

Anthropic occupies a different position. Its founding is itself a translation event of a special kind: in 2021, seven researchers left OpenAI, having concluded that its organizational conditions were not compatible with the kind of safety-focused, long-horizon AI research they believed the historical moment required, and they built a new institution around that conviction. Anthropic is simultaneously an invention organization (its alignment and interpretability research represents some of the most serious work being done on the hardest problems in AI) and a rapidly growing commercial enterprise. The tension between those two identities is real and acknowledged: in an interview on the Dwarkesh Podcast in February 2026, CEO Dario Amodei described the commercial pressure as “incredible,” while also arguing that safety discipline and capability are not in opposition. Whether that argument holds as the company scales, and whether the tension ultimately proves a strength or a structural risk, are among the more consequential open questions in the current AI landscape.

Meta AI Research, known as FAIR, has been one of the most significant open research organizations in AI for more than ten years. Under Yann LeCun’s leadership it produced foundational work in self-supervised learning and computer vision, among other important contributions. Most recently, the LLaMA family of open-weight models has become the foundation of a large part of the open-source AI ecosystem. FAIR’s commitment to publishing openly and releasing model weights democratized AI research: it gave universities, startups, and independent researchers access to capable foundation models they could never have built themselves, and seeded an entire ecosystem that would otherwise have had no starting point. The fact that LeCun left Meta to found AMI, the research startup discussed below, is, through the framework’s lens, a significant signal. In mid-2025 Meta restructured its AI divisions, subordinating FAIR’s long-horizon research program to a new unit focused on competing commercially with OpenAI and Google. Multiple former employees reported that FAIR had been dying a slow death as Meta prioritized commercially focused teams over long-term research. LeCun’s response was to leave. In an interview with WIRED, he said that Meta’s push to catch up with the industry on large language models was not his interest, and that he told Zuckerberg he could pursue the research he believed mattered faster and better outside Meta. One of the architects of FAIR’s invention culture had concluded that the organizational conditions at a large platform company were no longer compatible with the kind of long-horizon fundamental research he believed the moment required. We have seen that pattern before.

OpenAI started as a genuine research organization, and its early work was real. GPT-3, published in 2020, was a significant research achievement. ChatGPT, launched in November 2022 and built on GPT-3.5, was OpenAI’s translation of that research into a product that the world could use. OpenAI’s path since ChatGPT is a familiar one in the history of technology. Commercial success generates pressure to deliver more products faster, and under that pressure research tends to lose ground. The invention layer gradually gives way to translation and deployment. Several of OpenAI’s most prominent researchers have departed, citing concerns about the balance between research depth and commercial speed. OpenAI still does research, and some of it is serious. But the center of gravity has shifted, and the organization that produced GPT-3 as a research contribution is not quite the same organization that exists today. That is not a criticism. It is what the framework would expect.

Not everyone, however, believes the current dominant LLM paradigm is heading in the right direction. In March 2026, Yann LeCun, Turing Award winner, long-serving chief AI scientist at Meta, and one of the architects of modern deep learning, launched AMI (Advanced Machine Intelligence), a Paris-based research startup that closed a $1.03 billion seed round at a $3.5 billion valuation. The raise is notable not primarily for its size but for what it represents: a serious, well-credentialed bet that the current dominant paradigm is built on the wrong foundation. LeCun’s argument, developed over years of public commentary and research, is that large language models are linguistically fluent but lack genuine world understanding: they model language about reality rather than reality itself. I find this argument compelling. The gap between linguistic fluency and genuine understanding is one I have observed repeatedly at the boundary between AI research and its application, and it is not a gap that more data or larger models alone seem likely to close. AMI’s research program centers on world models, AI systems that learn from sensory and physical data rather than text, built around an architecture called JEPA (Joint Embedding Predictive Architecture) that LeCun developed at Meta. The company’s CEO, Alexandre LeBrun, told TechCrunch directly that AMI is “not your typical applied AI startup” that ships a product in months.

The debate over the limitations of the current LLM paradigm, and whether JEPA world models will eventually supersede it, is genuinely open. What the framework raises, regardless of how that debate resolves, is a structural question about AMI’s organizational conditions. Invention environments require insulation from commercial pressure to function, and the history of great research organizations suggests that insulation is most durable when it comes from structural conditions rather than from the patience of investors. Whether a $1 billion seed round, at a valuation established before a single product exists, creates the right conditions for the kind of long-horizon invention LeCun and the other founders are attempting is an open question. The capital is extraordinary, and so is the team: LeCun is one of the three scientists whose foundational work made modern AI possible, and the researchers he has assembled around him carry some of the deepest credentials in the field. However, history teaches us that the organizational conditions that capital and talent create are not necessarily the ones that produce the most consequential innovation and disruption. And beyond the organizational question, AMI faces a steep technical one: to disrupt the current paradigm, world models will need to prove superiority over LLMs in at least one fundamental dimension, such as accuracy, cost, generalization, or speed, and then demonstrate the ability to scale. This is made harder by the fact that LLMs are not standing still. They continue to improve rapidly, raising the bar that any alternative paradigm must clear. The scientific challenge is formidable enough on its own, but the organizational and competitive ones may prove equally demanding, even for a team of this caliber.

There is also a second-order phenomenon worth noting. In an interview with TechCrunch, Alexandre LeBrun predicted that world models would become the next buzzword, and that within months every company would adopt the label to attract funding. He said this candidly, acknowledging that AMI itself would not be immune to the dynamic he was predicting. If that prediction proves accurate, and the history of AI is full of precedents for it, from expert systems in the 1980s to big data in the 2010s to deep learning after 2012, it would represent a recurrence of the domain construction problem described in Part I: a new conceptual frame attracting organizations that use its terminology without the depth it demands, slowing the assembly of the domain rather than accelerating it. The translation gap does not only appear between invention and deployment. It appears, sometimes, between a genuine research agenda and the industry that forms around its terminology.

The Translation Layer: Who Is Asking the Right Question

The most consequential translation act in recent AI history was not a research breakthrough. It was a product decision. When OpenAI released ChatGPT in November 2022, it was not introducing a fundamentally new architecture or a novel training approach. It was asking, and answering, a specific question: what does a large language model feel like to a person sitting at a keyboard who simply wants something done? That perceptual shift, from what a model can do in a benchmark to what it feels like to use, is the translation act in the sense developed in Part II.

OpenAI’s trajectory illustrates both the power and the fragility of an organization built primarily, and often only, around translation. The translation instinct is genuine and has been consistently demonstrated: each major product release has been less a research announcement than a reframing of what the underlying capability is for. But translation organizations depend on continued access to invention, either their own or borrowed, and on deployment infrastructure at scale. OpenAI has had to build or acquire both under significant competitive pressure and organizational complexity. The tension between its original research mission and its commercial imperatives is exactly the tension the framework would predict: translation organizations that grow rapidly must eventually choose, more or less consciously, which phase of the arc they are primarily optimizing for.

Microsoft’s relationship with OpenAI, from the framework’s perspective, is one of the more structurally interesting arrangements in the current AI landscape. It is, in effect, an externalized solution to the translation problem. Microsoft under Satya Nadella rebuilt its translation capacity after the stack-ranking era described in Part II, but recognized that rebuilding the invention layer from scratch would take too long. In January 2023, Microsoft committed a reported $10 billion to OpenAI in a multiyear partnership that made Azure OpenAI’s exclusive cloud provider. The arrangement gives Microsoft access to translation and some degree of invention, while Microsoft provides what it has in abundance: deployment infrastructure, enterprise relationships, and distribution reach. It mirrors, in a distributed form, what AT&T’s vertically integrated structure provided Bell Labs: a path from research to deployment that does not require a single organization to be excellent at all three phases simultaneously.

Apple’s position in the current AI landscape is harder to read through the framework. Apple has historically been one of the most disciplined translation organizations in the technology industry. Its pattern (wait until a technology domain is mature enough to deliver a seamless, integrated experience, then enter with force) has produced some of the most consequential product innovations of the past half-century. Tim Cook has acknowledged this approach explicitly, noting that Apple has rarely been first to a new technology, pointing to the PC before the Mac, the smartphone before the iPhone, the tablet before the iPad, and the MP3 player before the iPod, and arguing that Apple’s advantage lies not in arriving first but in arriving better. But that strategy is now under visible pressure. Apple’s major AI initiative, Apple Intelligence, launched in 2024 with its most important features significantly delayed, and the upgraded Siri at its center has been pushed to 2026. A telling signal of this pressure came in December 2025, when Apple replaced its longtime AI chief John Giannandrea with Amar Subramanya, a sixteen-year Google veteran who ended his tenure there as head of engineering for the Gemini Assistant, and who incidentally was my colleague when I was at Google. His arrival coincided with reports of a roughly $1 billion per year deal under which Apple would license Gemini to power Siri. The company that built its reputation on owning its technology stack end to end had concluded that building its own foundation models from scratch would take too long to remain competitive. The question the framework raises is not whether Apple can translate AI effectively when it chooses to, but whether the pace of AI development leaves room for the kind of patient observation that Apple’s historical approach requires. That question does not yet have an answer.

The Enterprise Ecosystem: Translation Without Invention

The framework developed in this series of posts was built largely from historical cases: Bell Labs, Xerox PARC, IBM, Nokia, Kodak. In each case, the organizations involved had genuine invention capacity: they had built or possessed something real, even when they failed to translate or deploy it effectively. The current enterprise AI ecosystem presents a different and in some ways more challenging situation: a large and growing number of organizations attempting to perform translation and deployment against an invention layer they do not possess and cannot build.

I have spent considerable time in this space, working with and within enterprise AI organizations, and what I observe is a structural problem that the framework makes visible. The foundation models produced by the research laboratories are accessible via API. The tooling, orchestration frameworks, vector databases, and deployment infrastructure are available as commodities. The barrier to assembling an AI-powered enterprise product has never been lower. And that accessibility, which is in many respects a genuine democratization of powerful technology, creates a specific competitive problem: when everyone is assembling the same components, differentiation cannot come from the components. It can only come from translation, from a genuine answer to the question of what this specific capability is for, in this specific organizational context, for a specific user.

Translation, in the precise sense this essay uses the term, requires domain depth: understanding the invention well enough to see not only what it can do but also what it cannot do, where its limits lie, and what those limits mean for a real user in a real situation. This kind of knowledge comes from years of working at the boundary between AI research and its application. It is not available in a vendor’s documentation or a conference presentation, and it cannot be acquired quickly. It is also not enough to assemble a team of excellent software engineers led by an equally excellent engineering manager, or to appoint a product manager whose experience lies elsewhere. Neither profile, however capable, has spent the years at that boundary that translation demands. The generation of leaders now building enterprise AI products was largely trained in enterprise software, cloud services, or digital transformation, disciplines with their own rigors and legitimate expertise, but not disciplines that produce deep familiarity with AI’s specific capabilities and failure modes. That familiarity is not widely distributed in the enterprise AI leadership community today, and its absence is the single most consistent explanation for why so many enterprise AI efforts produce demonstrations that impress but deployments that disappoint.

The result is a pattern that the framework would predict, and that I have watched play out repeatedly in the enterprise space: organizations attempting to perform translation (building demos, writing use cases, packaging APIs into products) without the underlying perceptual act that distinguishes translation from mere assembly. When the components are the same and the translation capacity is shallow, products converge. Differentiation migrates to pricing, to go-to-market execution, to bombastic and often hollow buzzwords, to strategic partnership arrangements. These are real competitive levers, but they are not durable ones. Durable differentiation comes from knowing, earlier and more specifically than competitors, what a given capability is ready to become for a given user. That knowledge is the scarcest resource in the current enterprise AI ecosystem, and the one least visible in a funding pitch or a product roadmap.

There is a further dimension worth naming carefully. The domain gaps identified in Part I (reliability and evaluation infrastructure, memory and grounding, integration standards, accountability frameworks, and human-AI collaboration models) are experienced most acutely not in research laboratories but in enterprise deployment. It is in the enterprise context that the absence of reliable evaluation methodology becomes a practical problem, that the lack of integration standards becomes a daily engineering burden, and that the accountability gap becomes a legal and reputational risk. The enterprise ecosystem is, in this sense, the environment where the consequences of the incomplete domain are felt most immediately and most concretely.

This creates a paradox. The organizations that feel the domain gaps most directly are often the ones least positioned to address them, because addressing them requires the invention depth they lack. The organizations with the invention depth are often insulated from the enterprise deployment reality by the distance between a research laboratory and a production system. The domain construction work that Part I argued is the most consequential work in the current AI era (the patient, unglamorous building of the infrastructure that makes AI reliably deployable) falls into the gap between these two worlds.

Three Structural Tensions Worth Naming

The framework does not resolve the current AI landscape into a clear picture of who is well-positioned and who is not. What it does is make certain structural tensions visible that are worth naming honestly.

The Cannibalization Problem

Google faces a structural tension that has appeared repeatedly in the history of technology. One of the most powerful applications of large language models is conversational search, which threatens, or at minimum substantially disrupts, the web search business that generates most of Google’s revenue and funds its research. This is not about Google’s leadership or strategy choices. It is an observation about organizational dynamics that the framework’s historical cases make familiar: Kodak faced it with the digital camera, Nokia with the smartphone, Xerox with the personal computer. In each case, cannibalization fear was one of the most powerful inhibitors of translation. Google’s situation is structurally identical. Whether its leadership can navigate that tension more successfully than its predecessors is an open question. What history tells us is that this tension is real, structural, and not easily overcome regardless of the quality of the leadership involved.

The Publication Paradox

The open research culture that characterized AI development for most of the past decade, in which major architectural advances were published, shared at conferences, and rapidly built upon by the broader community, accelerated the field’s progress in ways that are difficult to overstate. It also created a specific dynamic that the framework illuminates: inventions were available for translation by whoever moved fastest, not by whoever invented them. The Transformer is the clearest example, but not the only one. As AI becomes more commercially consequential, the incentives to publish openly are in tension with the incentives to translate quickly. The shift toward less open publication is already visible and well documented. OpenAI, which began as an organization committed to publishing its research openly, now withholds critical technical details about its most capable models. A 2025 transparency index published by researchers from Stanford, Berkeley, Princeton and MIT found that industry-wide transparency has declined since 2024, with the entire field systematically opaque about training data, training compute, and societal impact. The shift is a rational commercial response to competitive pressure. Whether it is good for the field’s long-term domain construction is a separate question, and not one that has a clean answer.

The Domain Completion Question

The five gaps identified in Part I (reliability and evaluation, memory and grounding, integration standards, accountability frameworks, and human-AI collaboration models) each require a different organizational type to close. Reliability and evaluation infrastructure requires the kind of patient, rigorous work that invention environments do well. Integration standards require industry-wide coordination that no single organization can provide unilaterally. Accountability frameworks require engagement with legal, regulatory, and professional institutions that technology organizations are not well-equipped to navigate alone. Human-AI collaboration models will emerge, as Part I argued, from the accumulated experience of deployment at scale: from the enterprise ecosystem, not the research lab.

What this means is that the domain completion work is inherently distributed. No single organization (not Google, not OpenAI, not Anthropic, not Microsoft) is structurally positioned to close all five gaps. The work requires something closer to the collaborative infrastructure that W. Brian Arthur describes as characteristic of mature technological domains: a network of organizations, institutions, and practices that together constitute the domain, without any single actor controlling the whole. That network does not yet exist for AI. Building it, or recognizing when it is being built, piece by piece, in places that do not attract headlines, is the work the framework points toward.

The emergence of autonomous agents sharpens this point considerably. The most-discussed open-source project of early 2026 was OpenClaw, a local autonomous agent that connects large language models to email, calendars, messaging platforms, and file systems, acting on behalf of users without waiting to be prompted. Its rapid adoption, over 300,000 GitHub stars within five months of its November 2025 launch, reflects genuine demand for AI that completes tasks rather than merely discusses them. But OpenClaw also illustrates, with unusual clarity, which domain gaps remain open. Accountability frameworks do not yet exist for agents that act beyond their user’s explicit intent. Evaluation infrastructure cannot yet reliably distinguish a well-configured agent from a misconfigured one. Integration standards are absent, which is why the security vulnerabilities discovered in third-party OpenClaw skills were predictable in retrospect: there was no vetting infrastructure to prevent them. The agents are moving faster than the domain that would make them safe to deploy. That is not a criticism of the technology. It is a precise description of where domain construction work is most urgently needed.

The Bridge Under Construction

The title of Part II of this series was “The Bridge That Was Never Built.” The historical cases (Xerox PARC, Nokia, Kodak, IBM) were cases of bridges that were never started, or started and abandoned, or built on the wrong foundations. The current AI landscape presents a different picture, and in some respects a more hopeful one: the bridge is being built, by multiple organizations, with genuine seriousness and significant resources.

But it is being built unevenly. The invention layer is strong and concentrated. The translation layer is active and consequential, though organizationally fragile in some of its most visible expressions. The deployment layer, where the domain gaps are felt most directly and where the patient infrastructure work must eventually happen, is crowded with organizations attempting translation without the depth it requires, and underserved by the domain expertise that would make their efforts more productive.

I have spent most of my career at the boundary between what AI can do and what people actually need from it. It is an uncomfortable place to be, close enough to the research to know what is real and what is overstated, and close enough to the application to know how much remains unsolved. What I have learned is that the most consequential work rarely attracts the most attention. The headlines follow the model releases. The domain construction happens in the gaps between them.

The most important AI work of the next decade may be exactly the kind that is hardest to celebrate: patient, distributed, technically demanding, and aimed not at what AI can do in a benchmark but at what it can reliably do for a person who simply needs something done.
