Why the Real AI Revolution Hasn’t Happened Yet.

This is the first of three posts on innovation: what it actually is, how it differs from invention, and how technologies evolve through the construction of domains, translation into products, and the specific kind of leadership each phase requires.

The thing everyone is calling the AI revolution, meaning ChatGPT, Claude, Gemini, the race among large language models (LLMs), and autonomous agents, may not be the revolution at all. It may be the precondition for one.

That is a disorienting claim, given how much has visibly changed in the past ten or so years. But there is a body of thought, largely outside the AI field itself, and predating the recent popularity of AI, that suggests we are misreading the moment. We are celebrating the spark when what matters is the system that makes the spark reliable, scalable, and safe enough to hand to the world.

The distinction is not pedantic. Imprecision about where we are in the AI story has real consequences: for how we invest, for what we build, and for what we systematically neglect. The field has a long history of technical brilliance outrunning genuine usefulness, and of mistaking the former for the latter.

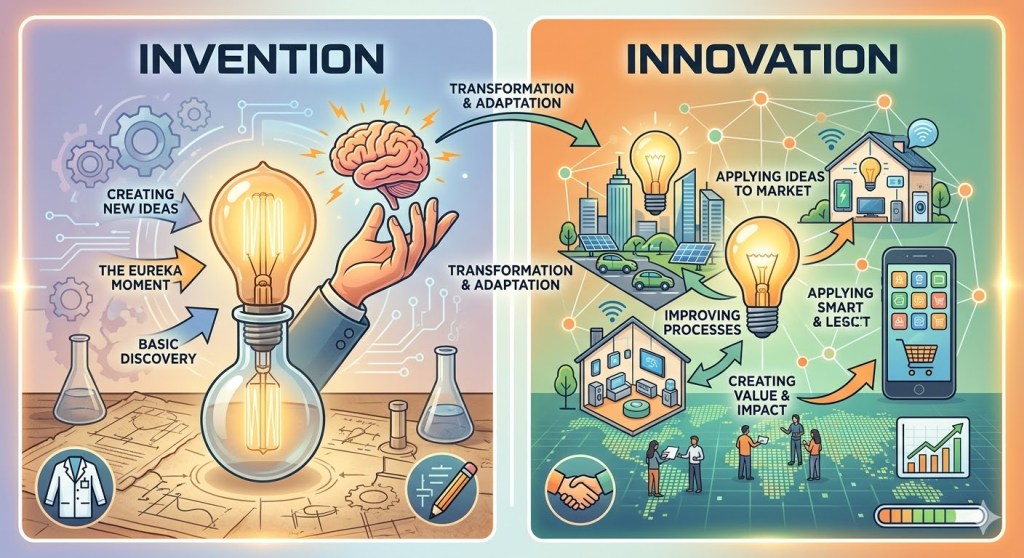

Invention and Innovation Are Not the Same Thing

Invention is the creation of something genuinely new: a concept, a technique, an architecture that did not exist before. Innovation is the application of something, new or existing, in a way that creates value for real people in the real world. The two are not the same thing, and conflating them has been one of the most persistent and costly errors in technology development generally, and in AI specifically.

The iPhone did not invent the touchscreen, the mobile phone, or the MP3 player. It combined them into a seamless experience that transformed how billions of people communicate and navigate the world. Thomas Edison understood the distinction instinctively. He did not merely invent the incandescent light bulb. Scores of inventors had worked on electric lighting before him. What he built was the entire system that made the bulb worth anything: the generators, the distribution infrastructure, the wiring standards, the utility business model. The bulb was the invention. The electrification of cities was the innovation. Edison was unusual in grasping that one without the other was commercially worthless, which is why he founded a laboratory explicitly organized around turning inventions into deployable systems, not just ideas.

This maps onto a parallel distinction in technology development: the difference between technology innovation and product innovation. Technology innovation advances what is technically possible. Product innovation takes whatever is available and shapes it into something people actually want to use. The AI field has historically been far better at the former than the latter. Better accuracy, faster algorithms, more sophisticated architectures: the technical metrics kept improving while the gap between what a system could do and what a person actually needed it to do remained stubbornly wide. Virtual assistants are the clearest example. As I argued in a recent post, The Rise and Fall of Virtual Assistants, the technology behind Siri, Alexa, and Google Assistant improved steadily for over a decade. Adoption did not. The gap was not technical.

For virtual assistants, the gap was mostly between technological advancement and actual user needs (what in commercial terms gets called a lack of product-market fit, but is in fact something deeper). In the general case, though, the gap is not primarily a technical problem. It is a domain problem. And understanding what that means requires a framework that most AI discourse has not yet absorbed.

Arthur’s Deeper Frame: Technologies as Combinatorial Domains

A few years ago I happened to read W. Brian Arthur’s fascinating 2009 book The Nature of Technology. It provides a deep understanding of how technologies are born, how they evolve, and why some transform civilization while others quietly disappear. But more importantly, it reframes invention and innovation at a more fundamental level. Arthur argues that most of what we call invention is actually combinatorial. New technologies are built by combining existing ones in novel ways, typically to exploit a natural phenomenon that was previously unharnessed or underexploited. This is a direct challenge to the romantic notion of invention as a singular flash of genius creating something from nothing.

Arthur’s most powerful concept is the domain: a cluster of technologies and practices built around a core principle or set of phenomena, which together form a self-contained, productive infrastructure that practitioners can draw upon to solve problems. A domain is not just an industry or a field. It is something more structured: a system of knowledge, tools, and practices that cohere around a central principle, with its own internal logic, language, standards, and combinatorial power. Digital electronics is a domain. Chemistry as the basis for pharmaceuticals is a domain. The collection of practices around combustion engineering is a domain.

When a domain is complete, the technology becomes reliably exploitable at scale by non-specialists. Electricians today don’t need to understand Maxwell’s equations to wire a building. The underlying science has been absorbed into the domain, and the domain has been absorbed into practice.

Arthur also argues that technologies evolve by solving the internal problems they create. Each breakthrough reveals new needs and gaps, which become the seedbed for the next wave of innovation. This is recursive and organic, not the linear pipeline from lab to market that most business thinking assumes.

Three Examples That Sharpen the Point

There are at least three examples in the history of technology, cited by Arthur, that sharpen the concept of domain.

The jet engine. The thermodynamic phenomena underlying jet propulsion were understood long before jet engines flew reliably. Building the domain required constructing an entire surrounding infrastructure: metallurgy for turbine blades that could withstand extreme temperatures, fuel systems, aerodynamic theory, maintenance practices, certification regimes. And once deployed, the technology revealed new internal problems (noise, fuel efficiency, reliability at altitude) which drove further innovation. The invention of the jet engine was not a moment. It was a process of domain construction.

The shipping container. Malcolm McLean’s standardized container in the 1950s was almost trivially simple as a technology: a steel box with standardized dimensions. But building the domain around it required renegotiating port labor agreements, redesigning ships and cranes, harmonizing international standards, restructuring customs processes, and reorganizing global supply chains. Almost none of that was technical invention. It was systems integration and standardization. And it reshaped global trade more profoundly than most celebrated technology breakthroughs of the same era.

The printing press. Gutenberg combined existing technologies (the screw press, oil-based inks, movable type) in a new configuration. But the press did not just produce books. It revealed the need for standardized spelling, punctuation conventions, copyright concepts, distribution networks, and literacy infrastructure. It created the conditions for its own domain to emerge, and that domain eventually produced the Reformation, modern science, and the nation-state. The gap between the technical innovation and the full civilizational impact was over a century. This parallel is especially useful for AI because it separates two things we habitually conflate: the moment a technology becomes powerful enough to be destabilizing, and the moment it becomes powerful enough to be transformative at civilizational scale.

The AI Story, Told Through Arthur’s Lens

The deep mathematical phenomena underlying modern AI (backpropagation, gradient descent, and attention mechanisms) were known for years, in some cases decades, before they became useful at scale. What changed around 2012 was not the phenomena but the assembly of a supporting domain: GPU compute that made training tractable, massive labeled datasets, cloud infrastructure, and software frameworks that made experimentation fast and cheap. AlexNet is often cited as the invention moment for deep learning: in 2012, it won the ImageNet Large Scale Visual Recognition Challenge by a margin so large that it shocked the computer vision community. In Arthur’s terms, however, it was the moment the domain became sufficiently assembled to make the underlying phenomena exploitable.

Since then, AI has followed Arthur’s recursive pattern almost mechanically. Better image recognition revealed the inadequacy of sequential data modeling, which led to attention mechanisms. Attention mechanisms revealed the inefficiency of recurrent architectures, which led to the Transformer. Transformers revealed the value of scale, which led to large language models. LLMs revealed the gap between language fluency and genuine task completion, a gap the field had been circling for decades in the context of dialogue systems and virtual assistants, which led to instruction tuning, reinforcement learning from human feedback, and agent frameworks. Agent frameworks revealed reliability, grounding, and memory problems that remain largely unsolved today.

Each solution opened a new problem space. The technology has been extraordinarily productive in exactly Arthur’s sense. But productivity is not the same as completeness.

The printing press parallel is worth dwelling on. Gutenberg’s press was printing books in the 1450s. The Reformation began in 1517. The scientific revolution gathered force over the following century. The institutional and epistemic structures that printing made possible took generations to fully emerge, not because the technology was slow to spread, but because the domain around it, and the human adaptations to it, took time to assemble. We are probably closer to 1460 than to 1550 in the AI story, though the clock runs considerably faster now. The destabilization has begun. The transformation is still ahead.

What Is Actually Missing

The incompleteness of AI’s domain is not a vague intuition. It is identifiable and concrete, and it manifests in at least five distinct ways.

Reliability and evaluation infrastructure. In mature engineering domains, there are established methods for specifying what a system should do, verifying that it does it, and certifying it for deployment. A pharmaceutical must pass through defined phases of clinical trials, each with accepted statistical methodologies, before it reaches a patient. A commercial aircraft must satisfy thousands of pages of airworthiness criteria, tested under standardized conditions, before it carries a single passenger. The methods are not perfect, but they are shared, stable, and trusted enough to underwrite deployment at scale. AI has none of that in any rigorous sense. Evaluation benchmarks are routinely gamed or saturated. There is no agreed methodology for testing an LLM-based system the way you would test a bridge or a pharmaceutical. Deploying AI in high-stakes settings currently requires heroic amounts of human oversight and custom engineering, the signature of an incomplete domain.

Memory and grounding. Current AI systems are largely stateless in a deep sense. Context windows are a workaround, not a solution. AI systems also struggle to reliably connect language to the current state of the world, to know what is true now, what has changed, what is specific to this context versus what is generic. Retrieval-Augmented Generation (RAG), the technique of supplementing a model’s responses with documents retrieved from an external knowledge base, is the most widely adopted attempt to address the grounding problem. It helps, but it is a patch on an architecture that was not designed for grounding from the ground up. RAG can retrieve relevant documents; it cannot reliably reason about their currency, reconcile contradictions between them, or know when retrieved information does not actually answer the question being asked. These are not exotic research problems. They are the difference between a system that impresses in a demo and a system that can be trusted to act consequentially on someone’s behalf.

Integration standards. When deploying an AI agent inside a real enterprise workflow, organizations face a combinatorial explosion of integration challenges: authentication, permissions, data formats, API heterogeneity, audit logging, error handling. There is no mature, standardized way to embed an AI agent into an organization’s systems. Anthropic’s Model Context Protocol (MCP) is a promising recent attempt to address part of this problem, providing a common interface through which AI agents can connect to external tools and data sources. But MCP addresses the connection layer, not the full integration problem. It does not resolve questions of access control, audit accountability, data governance, error recovery, or the organizational and legal responsibilities that arise when an AI agent acts inside a consequential workflow. It is a foundation, not a solution. Each deployment remains largely a custom engineering project built on top of whatever standards exist. Until a more comprehensive integration infrastructure stabilizes, enterprise AI deployment will remain expensive, fragile, and accessible mainly to organizations with substantial engineering resources.

Trust and accountability frameworks. In mature technology domains, legal, regulatory, and professional frameworks allocate responsibility when things go wrong. These frameworks are almost entirely absent for AI. But the gap goes beyond legislation and liability. Mature technologies also earn what might be called social legitimacy: a broad, tacit acceptance by the public, by professionals, and by institutions that the technology is trustworthy enough to be relied upon in consequential situations. We trust a bridge because civil engineering has centuries of accumulated standards, failures, and corrections behind it. We trust a prescribed drug because a known process of trials, review, and post-market surveillance stands behind the prescription. AI has not yet earned that legitimacy. There are no broadly accepted professional norms for AI practitioners, no equivalent of medical ethics or engineering codes of conduct, no public understanding of what responsible AI deployment looks like. This is not just a legal technicality. It is part of the domain infrastructure that makes a technology broadly deployable in regulated industries, and it is built as much through social trust as through formal rules. Healthcare, finance, legal services, education: the sectors where AI could deliver the most value are precisely the sectors where this combination of missing accountability and absent social legitimacy most severely constrains adoption.

Human-AI collaboration models. Perhaps most fundamentally, stable and well-understood models for how humans and AI systems should divide cognitive labor do not yet exist. When should a human defer to the AI? When should the AI escalate to the human? What level of autonomy is appropriate in which contexts? These questions are being answered ad hoc today, differently in every organization and product. In a mature domain, these patterns get standardized into roles, workflows, and professional norms. Commercial aviation is the clearest example: decades of experience with autopilot systems have produced precise, codified protocols for when pilots engage automation, when they override it, when the system alerts the human, and who bears responsibility at every stage. Every airline, every regulator, and every pilot training program operates from the same shared model of human-machine collaboration. No equivalent exists for AI. That standardization has not happened yet.

Arthur Is Not Alone

Arthur’s framework gains force when read alongside other thinkers who have studied how technologies mature and create value.

Carlota Perez, in Technological Revolutions and Financial Capital, argues that major technology waves, from the industrial revolution of the 1770s to the age of information and telecommunications of the 1970s and after, unfold in two main phases: an installation period, where the technology and its infrastructure are built out amid speculative frenzy, followed by a deployment period, where the technology diffuses through the economy and generates the bulk of its social and economic value. In Perez’s terms, AI is clearly still in the installation period. The deployment period, with its much larger and more evenly distributed value creation, lies ahead.

Clayton Christensen, in The Innovator’s Dilemma, adds a competitive dynamics layer that Arthur doesn’t address. Incumbents consistently fail to capitalize on the domain-construction phase because they optimize for existing customers rather than investing in the infrastructure that would serve new markets. This pattern is already visible in AI: the most consequential domain-construction work is often happening quietly, in organizations that generate few headlines.

Joseph Schumpeter, whose distinction between invention and innovation in his 1911 book The Theory of Economic Development started this conversation, would note that creative destruction requires completed domains to fully operate. You cannot disrupt an industry with a technology that practitioners cannot yet reliably deploy. The disruption waits for the domain.

Where the Value Actually Lives

The practical implication is not pessimistic: it is clarifying. The next wave of AI value will come less from the next model release and more from whoever closes the domain gaps: building reliable evaluation infrastructure, solving memory and grounding, establishing integration standards, creating accountability frameworks, and defining stable human-AI collaboration models.

This work is not underinvested in absolute terms. Significant capital is flowing into research on the problems the domain lacks. When Turing Award winner Yann LeCun can raise $1.03 billion in seed funding for AMI Labs, a company less than three months old working on world models as an alternative approach to grounding and real-world understanding, the field clearly recognizes what is missing. But that investment is directed at invention: searching for new architectural approaches to unsolved problems. The unglamorous infrastructure work (evaluation standards, integration protocols, accountability frameworks) attracts far less, because it produces no compelling demos and no proprietary competitive advantage. AMI’s own CEO acknowledged the company may take years to move from fundamental research to commercial applications. The investment follows the spark, not the system. And yet it is almost always the system where durable value accumulates, the way TCP/IP became the condition for everything that followed on the internet, regardless of who built the best browser in 1995.

One limit of this argument deserves acknowledgment. The incompleteness described here is largely a story about institutional deployment. But civilizational transformations often begin at the margins, in informal and unregulated contexts, long before institutions catch up. The printing press did not wait for copyright law. The internet did not wait for cybersecurity frameworks. A margin-first AI transformation may already be underway in ways that the enterprise adoption story does not capture. The domain may be incomplete and the revolution already beginning. Both things can be true.

But even granting that, the direction of domain construction still matters. More robust evaluation, better grounding, clearer accountability, and more standardized integration will increase the value AI can deliver regardless of whether they fully close every gap. And the work of building these things is, today, the most undervalued work in the field.

Conclusion: The Revolution Is the System

The jet engine was not the revolution. Commercial aviation was. The lithium-ion battery was not the revolution. The electrification of transport is. The Transformer architecture is not the revolution. Whatever emerges when AI’s domain is complete, when it becomes reliably deployable across the full range of human activity by people who are not AI specialists, that will be the revolution.

The language capability of current AI systems is genuinely extraordinary, and it would be a mistake to minimize what has been achieved. But the gap between impressive capability and reliable, trustworthy usefulness has not closed. It has, in some ways, become more visible: the capability is now compelling enough that the deployment failures are harder to explain away.

We are living through the spark. The system is still being built. And the most consequential work of the AI era may be the least celebrated: the patient, difficult, necessary construction of the domain that makes everything else possible.

In the next post, I look at the organizational and human side of this story: why the bridge between invention and innovation is so rarely built, and what kind of leadership it takes to build it.
